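# NVMe controller driver

Key functions of the SPDK NVMe driver API include:

- `spdk_nvme_ctrlr_get_ns()`: Get a handle to a namespace for the given controller.
- `spdk_nvme_ns_cmd_read()`: Submit a read I/O to the specified NVMe namespace.
- `spdk_nvme_ns_cmd_write()`: Submit a write I/O to the specified NVMe namespace.
- `spdk_nvme_ns_cmd_write_zeroes()`: Submit a write zeroes I/O to the specified NVMe namespace.
- `spdk_nvme_ns_cmd_dataset_management()`: Submit a data set management request to the specified NVMe namespace.
- `spdk_nvme_ns_cmd_flush()`: Submit a flush request to the specified NVMe namespace.
- `spdk_nvme_qpair_process_completions()`: Process any outstanding completions for I/O submitted on a queue pair.
- `spdk_nvme_ctrlr_cmd_admin_raw()`: Send the given admin command to the NVMe controller.
- `spdk_nvme_ctrlr_process_admin_completions()`: Process any outstanding completions for admin commands.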

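For example, the following perf command runs a 4 KiB read workload at queue depth 1 against the local PCIe NVMe SSD at `0000:04:00.0` for 200 seconds, with the protection-information options PRACT=0 and PRCHK=GUARD (guard-field checking):

```
perf -q 1 -o 4096 -w read -r 'trtype:PCIe traddr:0000:04:00.0' -t 200 -e 'PRACT=0,PRCHK=GUARD'
```

The `-r` argument is a transport ID. `spdk_nvme_probe()` enumerates the bus indicated by a transport ID and attaches the userspace NVMe driver to each device found, if desired; `spdk_nvme_ctrlr_alloc_io_qpair()` allocates an I/O queue pair (a submission queue and a completion queue).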

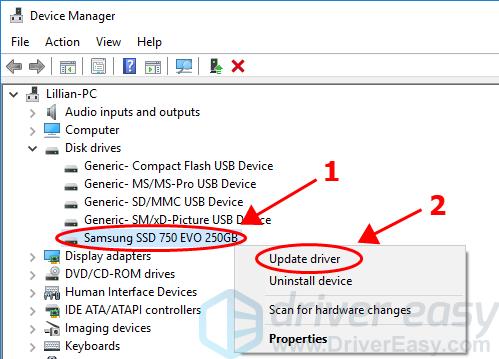

# Using the NVMe controller driver

The NVMe driver is a C library, providing direct, zero-copy data transfer to and from NVMe SSDs, that may be linked directly into an application. It is entirely passive: it spawns no threads and performs actions only in response to function calls from the application itself. The library controls NVMe devices by directly mapping the PCI BAR into the local process and performing MMIO. I/O is submitted asynchronously via queue pairs, and the general flow is not unlike that of Linux's libaio.

More recently, the library has been improved to also connect to remote NVMe devices via NVMe over Fabrics. Users may now call `spdk_nvme_probe()` both on local PCI buses and on remote NVMe over Fabrics discovery services.

A number of examples that demonstrate how to use the NVMe library are provided in the `examples/nvme` directory of the repository.

SPDK provides a plugin for the popular fio tool for running some basic benchmarks; see the fio start-up guide for more details. The fio tool is widely used because it is very flexible, but that flexibility adds overhead and reduces the efficiency of SPDK. SPDK therefore also provides perf, a benchmarking tool with minimal overhead during benchmarking. The NVMe perf utility in `examples/nvme/perf` is one of the examples, and it can also be used for performance tests. We have measured up to 2.6 times more IOPS per core when using perf than when using fio with the 4K 100% random read workload.

The perf tool provides several run-time options to support the most common workloads, for example a 4K 100% random read workload to a local NVMe SSD for 300 seconds.